I spent last week at Web Science 2013 in Paris, and it was time well spent. Web Science was easily the most diverse conference I have ever attended. One reason for this diversity is that Web Science was co-located with CHI (Human-Computer Interaction) and Hypertext. More importantly, the Web Science community itself is very diverse. There were more than 300 participants from a wide array of disciplines. The talks ranged from philosophy to computer science (and everything in between), with keynotes by Cory Doctorow and Vint Cerf. This resulted in many insightful discussions that looked at the web from a multitude of angles. I really enjoyed the wide variety of talks.

Nevertheless, there were some talks that failed to resonate with the audience. It seems to me that this was mostly due to the fact that they were too rooted in a single discipline. Some presenters assumed a common understanding of the problem discussed and used a lot of domain-specific vocabulary that made it hard to follow the talk. Don’t get me wrong: most presenters tried to appeal to the whole audience but with some subjects this seemed to be impossible.

To me, this shows that we need a clearer understanding of what Web Science actually is, and more discussion of what should be researched under this banner. There seems to be some uncertainty about this, which was also reflected in the peer reviews. Hugh Davis, the general chair for Websci’13, highlighted this in his opening speech:

I think that Web Science is a good example where Open Peer Review could contribute to a common understanding and a better communication among the actors involved. I have been critical of open processes in the past because they take away the benefits of blinding. Mark Bernstein, the program chair, also stressed this point in a tweet:

Nowadays, however, I think that the potential benefits of open peer review (transparency, increased communication, incentives to write better reviews) outweigh the effects of taking away the anonymity of reviewers. Science will always be influenced by power structures, but with open peer review they are at least visible. To be clear, I really like the inclusive approach to Web Science that the organizers have taken. The web cannot be understood with the paradigm of a single discipline, and at this point it is very valuable to get input from all sides of the discussion. In my opinion, open peer review could help facilitate this discussion before and after the conference as well.

Contributions

I made two contributions to this year’s Web Science conference. First, I presented a paper written together with Sebastian Dennerlein in the Social Theory for Web Science Workshop, entitled “Towards a Model of Interdisciplinary Teamwork for Web Science: What can Social Theory Contribute?”. In this position paper, we argue that social scientists and computer scientists do not work together in an interdisciplinary way because they take fundamentally different approaches to research. We sketch a model of interdisciplinary teamwork to overcome this problem. The feedback on this talk was very interesting. On the one hand, participants could relate to the problem; on the other hand, they alerted us to many other influences on interdisciplinary teamwork. For one, there is often disagreement at the very beginning of a research project about what the problem actually is. Furthermore, the disciplines themselves are fragmented and often follow different paradigms. We will consider this feedback when specifying the formal model. You can find the paper here and the slides of my talk below.

In general, the workshop was very well attended and there was a certain sense of common understanding regarding opportunities and challenges of applying social theory in web science. All in all, I think that a community has been established that could produce interesting results in the future.

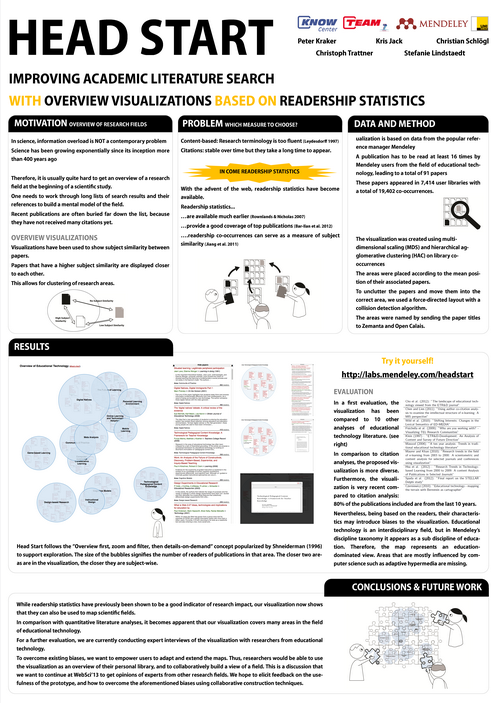

My second contribution was a poster with the title “Head Start: Improving Academic Literature Search with Overview Visualizations based on Readership Statistics”, which I co-wrote with Kris Jack, Christian Schlögl, Christoph Trattner, and Stefanie Lindstaedt. As you may recall, Head Start is an interactive visualization of the research field of Educational Technology based on co-readership structures. Head Start was received very positively. Many participants were interested in the idea of using readership statistics for mapping, among them scientometricians as well as educational technologists. Many comments concerned how the prototype could be extended. You can find the paper at the end of the post and the poster below.

Several participants noted that they would like to adapt and extend the visualization. Clare Hooper, for example, is working on a content-based representation of the field of Web Science, and it would be interesting to combine our approaches. This encouraged me even more to open-source the software as soon as possible.

All in all, it was a very enjoyable conference. I also like the way the organizers innovate on the format every year. The pecha kucha session worked especially well in my opinion, with concise and entertaining talks throughout. Thanks to all organizers, speakers, and participants for making this conference such a nice event!

Citation

Peter Kraker, Kris Jack, Christian Schlögl, Christoph Trattner, & Stefanie Lindstaedt (2013). Head Start: Improving Academic Literature Search with Overview Visualizations based on Readership Statistics. Web Science 2013.

Note: This is a reblog from the
